One iPhone recording.

Robot picks up the mug.

Real iPhone 15 Pro recording → HaMeR hand extraction → trajectory retargeting → Franka Panda grasps the mug and lifts it 18.1 cm.

Real recording → robot grasp

Hand trajectory drives the approach. Expert funnel handles final contact. 18.1 cm clean lift.
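The hand-off between the two stages could look roughly like the sketch below. It is only an illustration: it assumes the "expert funnel" is a scripted controller that takes over once the end-effector is within some radius of the mug, and that the hand-off latches once triggered. `choose_targets` and `HANDOFF_RADIUS_M` are hypothetical names and values, not taken from the project.

```python
import math

# Hypothetical hand-off radius in metres; the project's real value is not stated.
HANDOFF_RADIUS_M = 0.08


def choose_targets(ee_positions, hand_targets, mug_pos,
                   handoff_radius=HANDOFF_RADIUS_M):
    """Pick, per frame, which target the controller should track.

    Far from the mug, the retargeted hand trajectory is followed ("hand").
    Once the end-effector first comes within `handoff_radius` of the mug,
    the assumed funnel stage takes over and stays engaged ("funnel").
    """
    plan = []
    funnel_engaged = False
    for ee, hand in zip(ee_positions, hand_targets):
        # Latch the hand-off the first time the end-effector is close enough.
        if not funnel_engaged and math.dist(ee, mug_pos) <= handoff_radius:
            funnel_engaged = True
        plan.append(("funnel", mug_pos) if funnel_engaged else ("hand", hand))
    return plan
```

Latching the switch is a design assumption: it keeps noisy hand-pose estimates from pulling the gripper away again once final contact has started.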

Validation replay

Same recording, different controller. A +17.2 cm lift confirms the recording carries usable grasp signals.

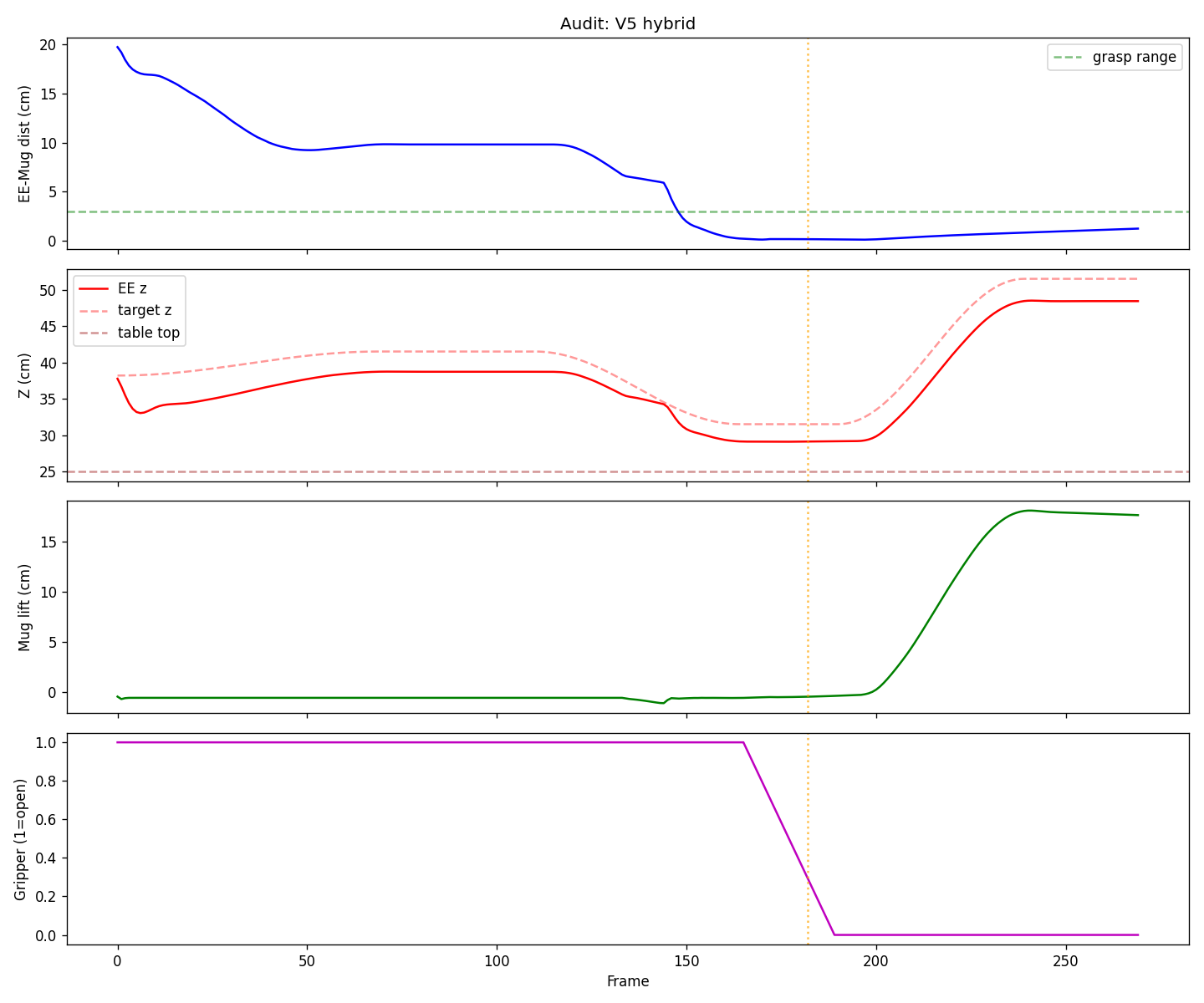

End-effector distance, height, mug lift, and gripper state over 270 frames
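One way the four plotted signals might be derived from logged positions is sketched here. It assumes per-frame 3D positions for the end-effector and the mug, reads "height" as end-effector height, measures lift relative to the mug's first-frame height, and treats the gripper as closed below a finger-width threshold. The function names and the `closed_below` value are hypothetical.

```python
import math


def grasp_signals(ee_positions, mug_positions, gripper_widths,
                  closed_below=0.02):
    """Compute per-frame signals from logged positions (metres).

    `closed_below` is an assumed finger-width threshold, not a project value.
    """
    z0 = mug_positions[0][2]  # mug height in the first frame is the baseline
    frames = []
    for ee, mug, width in zip(ee_positions, mug_positions, gripper_widths):
        frames.append({
            "ee_distance": math.dist(ee, mug),  # end-effector to mug
            "ee_height": ee[2],
            "mug_lift": mug[2] - z0,            # positive once the mug rises
            "gripper_closed": width < closed_below,
        })
    return frames


def peak_lift(frames):
    """Largest mug lift over the episode, in metres."""
    return max(f["mug_lift"] for f in frames)
```

With this convention, a reported lift such as 18.1 cm would correspond to `peak_lift(frames)` returning about 0.181.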

5 iterations in 1 day · Feb 28, 2026 · GroundingDINO + HaMeR on Modal · MuJoCo Panda